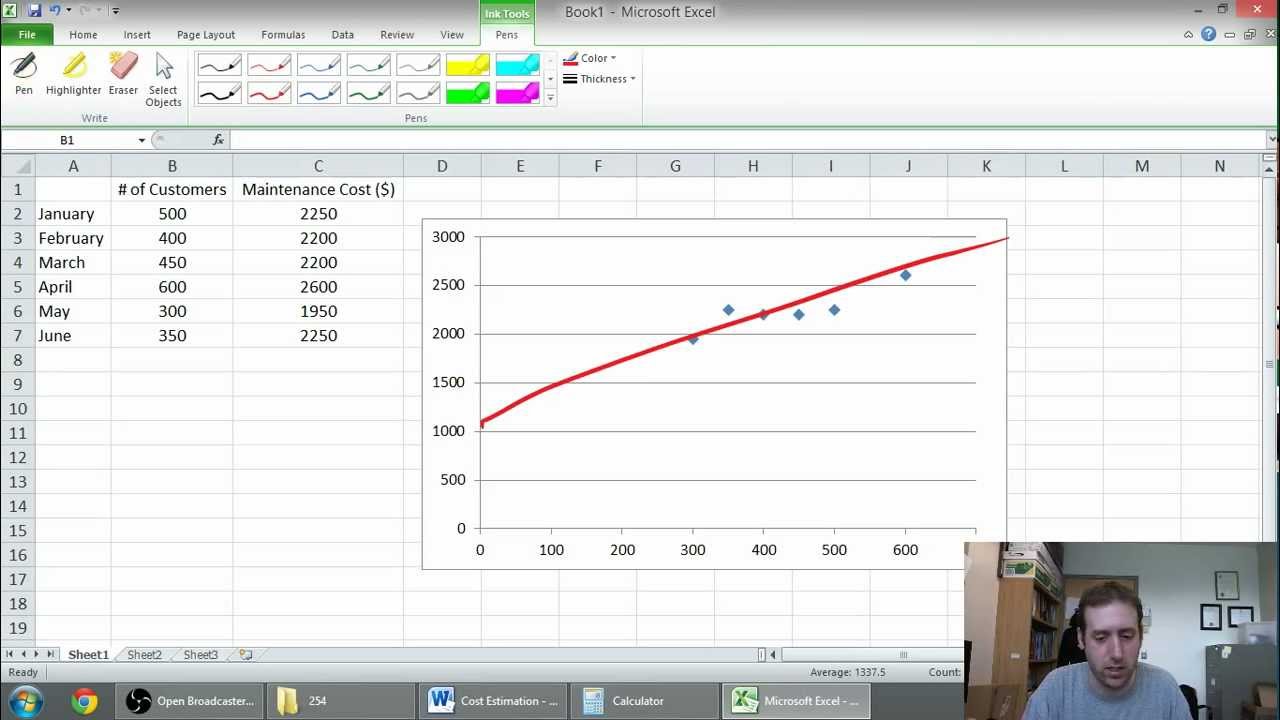

The purpose of least squares linear regression is to represent the relationship between one or more independent variables $x_1, x_2, \ldots$ and a variable $y$ that is dependent upon them in the following form:

$$y_i = \beta_0 + \sum_j \beta_j x_{ji} + e_i$$

where:

- $x_{ji}$ = the $i$th observed value of the independent variable $x_j$
- $y_i$ = the $i$th observed value of the dependent variable $y$
- $e_i$ = the error term (the difference between the observed $y$ value and that predicted by the model)
- $\beta_j$ = the regression slope for the variable $x_j$, and
- $\beta_0$ = the y-axis intercept.

Simple least squares linear regression assumes that there is only one independent variable $x$. If we assume that the error terms are Normally distributed, the equation reduces to:

$$y = \text{Normal}(mx + c,\ s)$$

where $m$ is the slope of the line, $c$ is the y-axis intercept and $s$ is the standard deviation of the variation of $y$ about this line.

Simple least squares linear regression is a very standard statistical analysis technique, particularly when one has little or no idea of the relationship between the $x$ and $y$ variables. It is probably so common because the mathematics of the analysis is simple (thanks to the Normality assumption), rather than because a straight line is a very common rule for the relationship between variables.

Assumptions behind least squares regression analysis

LSR makes four important assumptions:

1. Individual values of $y$ are independent of one another.
2. For each $x_i$, there are an infinite number of possible values of $y$, which are Normally distributed.
3. The distribution of $y$ given a value of $x$ has equal standard deviation for all $x$ values and is centered about the least squares regression line.
4. The means of the distribution of $y$ at each $x$ value can be connected by a straight line $y = mx + c$.

Statisticians often make transformations of the data (e.g. $\log(Y)$, $\sqrt{X}$) to force a linear relationship. That greatly extends the applicability of the regression model, but one must be particularly careful that the errors are reasonably Normal, and one runs an enormous risk in using the regression equations to make predictions outside the range of observations.

The simple least squares regression model determines the straight line that minimizes the sum of the squares of the errors $e_i$. This occurs when:

$$m = \frac{\sum_{i=1}^{n} x_i y_i - n\,\bar{x}\,\bar{y}}{\sum_{i=1}^{n} x_i^2 - n\,\bar{x}^2}, \qquad c = \bar{y} - m\,\bar{x}$$

where $\bar{x}$ and $\bar{y}$ are the means of the observed $x$ and $y$ data and $n$ is the number of data pairs $(x_i, y_i)$.

The fraction of the total variation in the dependent variable that is explained by the independent variable is known as the coefficient of determination $R^2$, which is calculated as:

$$R^2 = 1 - \frac{SSE}{TSS}$$

where SSE, the sum of squared errors, is given by:

$$SSE = \sum_{i=1}^{n} (y_i - \hat{y}_i)^2$$

and TSS, the total sum of squares, is given by:

$$TSS = \sum_{i=1}^{n} (y_i - \bar{y})^2$$

and where $\hat{y}_i$ are the predicted $y$ values at each $x_i$:

$$\hat{y}_i = m x_i + c$$

For simple least squares regression (i.e. only one independent variable), $R^2$ is equivalent to the square of the simple correlation coefficient $r$:

$$r = \frac{\sum_{i=1}^{n} x_i y_i - n\,\bar{x}\,\bar{y}}{\sqrt{\left(\sum_{i=1}^{n} x_i^2 - n\,\bar{x}^2\right)\left(\sum_{i=1}^{n} y_i^2 - n\,\bar{y}^2\right)}}$$

The coefficient $r$ provides a quantitative measure of the linear relationship between $x$ and $y$. It ranges from -1 to +1: a value of $r = -1$ or $+1$ indicates a perfect linear fit, and $r = 0$ indicates that no linear relationship exists at all. As the observations move closer to a straight line, SSE, the sum of squared errors between the observed and predicted $y$ values, tends to zero, so $r^2$ tends to 1 and therefore $r$ tends to -1 or +1, its sign depending on whether $m$ is negative or positive respectively.

The value of $r$ is used to determine the statistical significance of the fitted line, by first calculating the test statistic $t$ as:

$$t = \frac{r\sqrt{n-2}}{\sqrt{1-r^2}}$$

The $t$ statistic follows a t-distribution with $(n-2)$ degrees of freedom (provided the linear regression assumption of Normally distributed variation of $y$ about the regression line holds), which is used to determine whether the fit should be rejected or not at the required level of confidence.

The standard error of the y-estimate $S_{yx}$ is calculated as:

$$S_{yx} = \sqrt{\frac{\sum_{i=1}^{n} (y_i - \hat{y}_i)^2}{n-2}}$$

This is equivalent to the standard deviation of the error terms $e_i$. These errors reflect the true variability of the dependent variable $y$ about the least squares regression line. The denominator $(n-2)$ is used, instead of the $(n-1)$ we have seen before for sample standard deviation calculations, because two values, $m$ and $c$, have been estimated from the data to determine the equation, and we have therefore lost 2 degrees of freedom instead of the 1 degree of freedom usually lost in determining the mean.

The equation of the regression line and the $S_{yx}$ statistic can be used together to produce a stochastic model of the relationship between $X$ and $Y$, as follows:

$$Y = \text{Normal}(mX + c,\ S_{yx})$$

Some caution is needed in using such a model.
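The quantities discussed in this section (the slope m, intercept c, coefficient of determination R², correlation coefficient r, test statistic t and standard error S_yx) can be computed directly from a set of data pairs. The following is a minimal Python sketch using only the standard library. The names `simple_lsr` and `sample_y`, the dictionary keys, and the five sample data pairs are illustrative assumptions rather than anything from the original text, and the formulas for m, c and t are the standard textbook forms.

```python
import math
import random


def simple_lsr(xs, ys):
    """Fit y = m*x + c by simple least squares and return summary statistics.

    Hypothetical helper for illustration only.
    """
    n = len(xs)
    if n != len(ys) or n < 3:
        raise ValueError("need at least 3 (x, y) pairs of equal length")

    x_bar = sum(xs) / n
    y_bar = sum(ys) / n

    # Corrected sums used by the slope formula
    sxy = sum(x * y for x, y in zip(xs, ys)) - n * x_bar * y_bar
    sxx = sum(x * x for x in xs) - n * x_bar ** 2
    if sxx == 0:
        raise ValueError("all x values are identical; slope is undefined")

    m = sxy / sxx              # slope
    c = y_bar - m * x_bar      # y-axis intercept

    y_hat = [m * x + c for x in xs]                       # predicted y values
    sse = sum((y - yh) ** 2 for y, yh in zip(ys, y_hat))  # sum of squared errors
    tss = sum((y - y_bar) ** 2 for y in ys)               # total sum of squares
    if tss == 0:
        raise ValueError("all y values are identical; R^2 is undefined")

    r2 = max(1.0 - sse / tss, 0.0)            # guard against tiny negative round-off
    r = math.copysign(math.sqrt(r2), m)       # r takes the sign of the slope

    # Test statistic with n - 2 degrees of freedom; infinite for a perfect fit
    if r2 < 1.0:
        t = r * math.sqrt(n - 2) / math.sqrt(1.0 - r2)
    else:
        t = math.copysign(math.inf, m)

    s_yx = math.sqrt(sse / (n - 2))           # standard error of the y-estimate

    return {"m": m, "c": c, "r2": r2, "r": r, "t": t, "s_yx": s_yx, "n": n}


def sample_y(fit, x, rng=random):
    """Draw one value from the stochastic model y = Normal(m*x + c, S_yx)."""
    return rng.gauss(fit["m"] * x + fit["c"], fit["s_yx"])


if __name__ == "__main__":
    # Made-up data for demonstration purposes
    xs = [1, 2, 3, 4, 5]
    ys = [2, 4, 5, 4, 5]
    fit = simple_lsr(xs, ys)
    for key, value in fit.items():
        print(key, value)
    # Sampling inside the observed x range; extrapolating beyond it is risky
    print(sample_y(fit, 3.5, random.Random(1)))
```

The resulting t value would then be compared against a t-distribution with n - 2 degrees of freedom, which is left out here because the standard library has no t-distribution; a statistics package would be needed for that step.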